Now, if your file contained only plain ASCII characters (Latin letters, Arabic numerals, and some punctuation - but no accents), then you can probably choose any encoding you like from the list, and it probably won't make any difference. RTF and plain-text are not formats of this type.)Īll that OO sees is a bunch of bytes it has no idea how to turn those into characters.

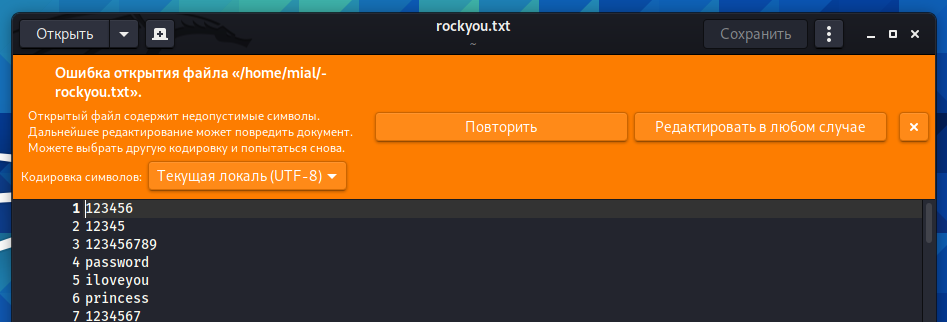

(To be fair, though, some file formats (like XML) do contain their encoding: but it has to be an encoding that lets you read far enough in the file to find the encoding, without interpreting any bytes incorrectly. Files don't store their encoding in the file (because most can't), and most filesystems don't store the encoding in the metadata either (though I believe Macs might). What OO is asking you is which of those encodings it should use when it interprets the bytes in the file you gave it. This ends up being able to encode all of the Unicode code points, but higher ones end up taking either 5 or 6 bytes to encode (or so), because you have to split up the bits between that many bytes, to leave enough room for the various flag bits. And once you get to the last byte of a given code point, there's some kind of signal in its upper bits that it is actually the last byte - this is for error checking. If the high bit is set in the first byte of a character, that means that more bytes are to come: the number of bytes to come depends on which of the highest few bits are set in the first. The first 128 code points are stored in a single byte, and are the same as in ASCII (so you can interpret an ASCII file as UTF-8), but the next byte values don't match any of the old 8-bit encodings. In UTF-8, each code point is split into a variable number of bytes. (You also can't take an ASCII file and interpret it as UTF-16, for the same reason as UTF-32.) UCS-2 does have a range of values that it can't use, though: these are the values that UTF-16 uses as the first of a combining character.) So it can only represent code points in the BMP this isn't enough. (This is where UTF-16 and UCS-2 differ: UCS-2 doesn't allow combining characters. The first - the ones that UTF-16 can represent directly - is the Basic Multilingual Plane, but it isn't quite big enough to store everything.) So most code points in UTF-16 are two bytes, but some are four. 
(In Unicode, each 16-bit code point group is a plane. In UTF-16, you store any code point below 65536 as-is, and use combining characters (that is, pairs of 16-bit units) to represent the code points in the other planes. And you can't take an ASCII file and interpret it as UTF-32, either: you'll be taking a group of four characters and trying to interpret them as one. So you're wasting (somewhere up to) 12 bits for every character, and even more than that if you're only using "low" code points (e.g. In UTF-32, you store each code point as it is, embedded in a 32-bit word. Possible encodings are UTF-32, UTF-16, UTF-8, and UCS-2/4 (AFAIK UCS-2 should not be used because it can't represent the entire Unicode character range, and UCS-4 is the same as UTF-32). Code point assignments for any other 8-bit encoding will not match.īut then again, when you create a file using Unicode, you don't store the code point directly as bytes in the file, either: you use a separate encoding that translates code point values to byte sequences. Code point assignments for ISO8859-1 characters may match, but I'm not sure if they actually do. "LATIN SMALL LETTER A") have the same numerical value as that character does in ASCII (e.g. Code points are (currently) up to 20-some bits long.Ĭode point assignments for ASCII characters (e.g. One is from a character to a code point - a given character always has the same code point value. There's also Unicode, which defines two different levels of conversion. KOI8-R assigns Cyrillic characters ISO8859-1 assigns various characters with accents for Western European languages, etc. So if you have a true 7-bit-ASCII file, it can be loaded using any of these character sets, and you'll get the right characters.) But none of them is compatible with the others: they all assign different characters to the byte values above 127. (The others are also "compatible" with ASCII, in that the first 128 bytes have the same characters as in ASCII. 
ASCII uses 7 bits (all defined ASCII characters' bytes have the highest bit clear), while the others use all 8 bits. All of the encodings mentioned above are alike in that they represent each character (that they're able to represent) in one byte or less.

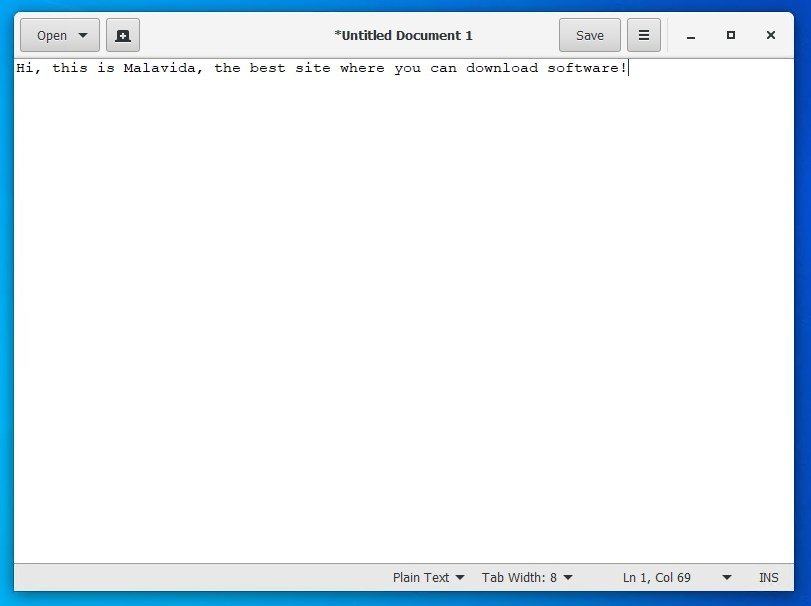

There are about a million different ways to encode characters into bytes. But why is it that when OOwriter open them that it asks about the character encoding etc, why not just open it up? Because, um, it doesn't know the character encoding?
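
If you want to see for yourself why a program can't just guess, here is a rough Python sketch. The sample bytes, the sample characters and the helper name `encoded_lengths` are my own picks for illustration, not anything OO itself uses. It decodes the same bytes under a few encodings, then shows how many bytes one character takes in each of the Unicode encodings.

```python
# Illustrative sketch: the sample bytes and characters are arbitrary choices.

# 1. Three byte values above 127 mean different things in different 8-bit encodings.
raw = bytes([0xC1, 0xC2, 0xC3])
as_latin1 = raw.decode("iso8859-1")  # accented Latin capitals
as_koi8r = raw.decode("koi8_r")      # Cyrillic letters
print(repr(as_latin1), repr(as_koi8r))

# 2. Pure 7-bit ASCII bytes give the same text under every ASCII-compatible encoding.
plain = b"hell"
compatible = {plain.decode(enc) for enc in ("ascii", "iso8859-1", "koi8_r", "utf-8")}
print(compatible)  # a set with a single element

# UTF-16 and UTF-32 read the same bytes in groups of two or four instead.
as_utf16 = plain.decode("utf-16-le")  # two unrelated characters
try:
    plain.decode("utf-32-le")
    utf32_ok = True
except UnicodeDecodeError:
    utf32_ok = False  # the four bytes form a value beyond the last code point
print(len(as_utf16), utf32_ok)


def encoded_lengths(ch):
    """Return how many bytes one character takes in each Unicode encoding."""
    return {enc: len(ch.encode(enc)) for enc in ("utf-8", "utf-16-be", "utf-32-be")}


# 3. Byte counts grow with the code point in UTF-8 and stay fixed in UTF-32.
for ch in ("a", "\u00e9", "\u20ac", "\U0001F600"):
    print(f"U+{ord(ch):04X}", encoded_lengths(ch))

# 4. A code point outside the first plane becomes two 16-bit units in UTF-16.
surrogates = "\U0001F600".encode("utf-16-be").hex()
print(surrogates)
```

The big-endian codec names are used so that Python doesn't prepend a byte order mark, which would otherwise add to the byte counts.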
